| Category |

Description |

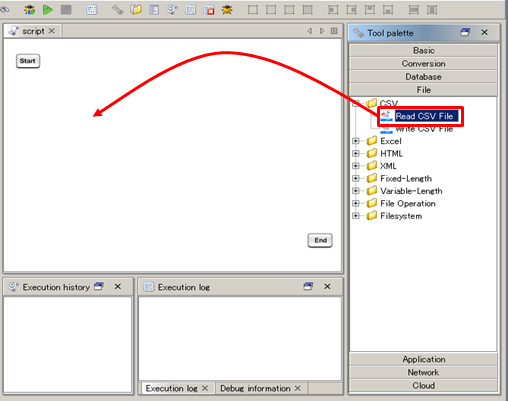

| Basic |

A category to which Validation and Assertion adapters belong. |

| Database |

A category to which adapters for connecting with RDB, XMLDB, and other databases belong. |

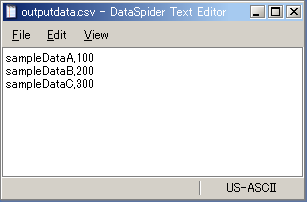

| File |

A category to which adapters for handling CSV, XML and other file formats belong. |

| Application |

A category to which adapters that interface different kinds of applications belong. It would include BI tools, Groupware, ERP, mainframe, and etc. |

| Network |

A category to which adapters that perform network operations, such as Mail and FTP belong. |

| Cloud |

A category to which adapters that interact with various cloud services belong. |

| Name |

variable scope |

Description |

Whether the value assigned is modifiable within a script |

Usage |

Example |

Remarks |

| Script variables |

Script |

Variables which are usable within from a script.

Script variables can be arbitrary defined by user. |

Yes

(Use Variable Mapper ) |

${ScriptVariableMame} |

${var} |

|

| Component variables |

Script |

Predefined variables implicitly used by declared components.

Number of rows having being processed or errors occurred during operations can be obtained through the component variables. |

No

(variables are assigned automatically) |

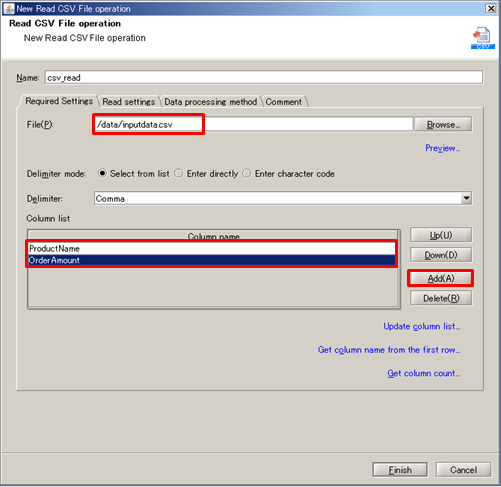

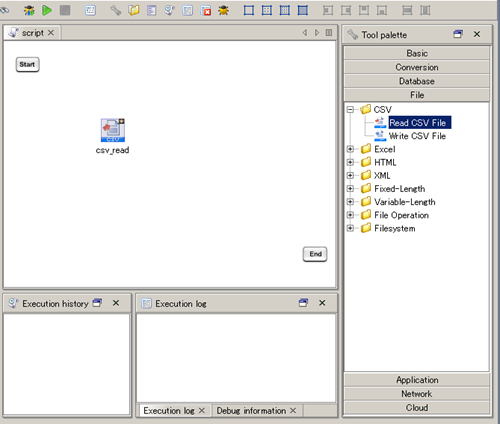

${ComponentName.ComponentVaribaleName} |

${csv_read.count} |

Initialized with its default value when component is executed. Initialized with its default value when component is executed. For more details, please refer to the help document available for each adapter For more details, please refer to the help document available for each adapter |

| Environmental variables |

DataSpiderServer |

Environmental variables can be used system wide.

Script variables can be arbitrary defined by user. |

No |

%{EnvironmentalVariableName} |

%{DB_HOST} |

|

| Trigger variables |

Trigger |

Predefined variables implicitly used by triggers. |

No |

${trigger.TriggerVariableName} |

${trigger.projectName} |

|

For further details, refer to Script variables.

For further details, refer to Script variables. Initialized with its default value when component is executed.

Initialized with its default value when component is executed. For more details, please refer to the help document available for each adapter

For more details, please refer to the help document available for each adapter For more details, refer to Managing environmental variables.

For more details, refer to Managing environmental variables. For further details, refer to Trigger Variables and trigger operation guide.
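The four reference forms differ only in their sigil and name shape, which the following sketch illustrates. It is not how DataSpider resolves variables internally; the functions `classify` and `expand` are hypothetical names introduced here. It also assumes that script variable names contain no dot and that no component is named `trigger`, neither of which the text above states.

```python
import re

# "$" or "%" followed by a name in braces, e.g. ${var} or %{DB_HOST}
PLACEHOLDER = re.compile(r"([$%])\{([^{}]+)\}")


def classify(reference):
    """Return the kind of variable that a single reference names."""
    match = PLACEHOLDER.fullmatch(reference)
    if match is None:
        raise ValueError(f"not a variable reference: {reference!r}")
    sigil, name = match.groups()
    if sigil == "%":
        return "environmental"
    if name.startswith("trigger."):
        return "trigger"
    # Assumption: a dotted name means ComponentName.ComponentVariableName
    if "." in name:
        return "component"
    return "script"


def expand(text, values):
    """Substitute each reference found in text with its entry in values.

    References that have no entry are left unchanged.
    """
    def substitute(match):
        return str(values.get(match.group(0), match.group(0)))

    return PLACEHOLDER.sub(substitute, text)


if __name__ == "__main__":
    for ref in ("${var}", "${csv_read.count}", "%{DB_HOST}", "${trigger.projectName}"):
        print(ref, "->", classify(ref))

    # Illustrative values only; real values are supplied at run time
    sample = {"${csv_read.count}": 120, "%{DB_HOST}": "db.example.internal"}
    print(expand("Loaded ${csv_read.count} rows from %{DB_HOST}", sample))
```

Keying the lookup on the full reference text keeps the `$` and `%` namespaces separate, so a script variable and an environmental variable with the same name would not collide in this sketch.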

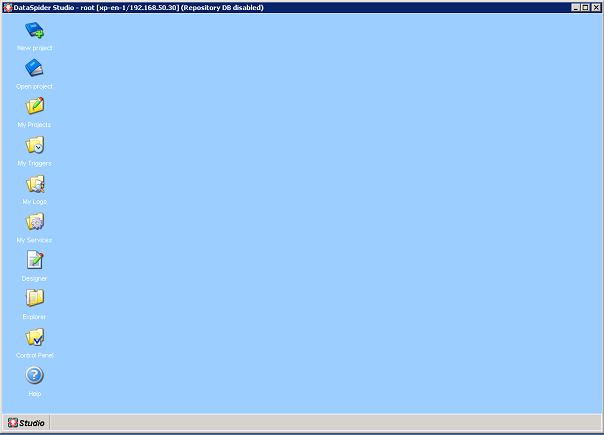

User accounts and groups cannot be added if no repository database is used.